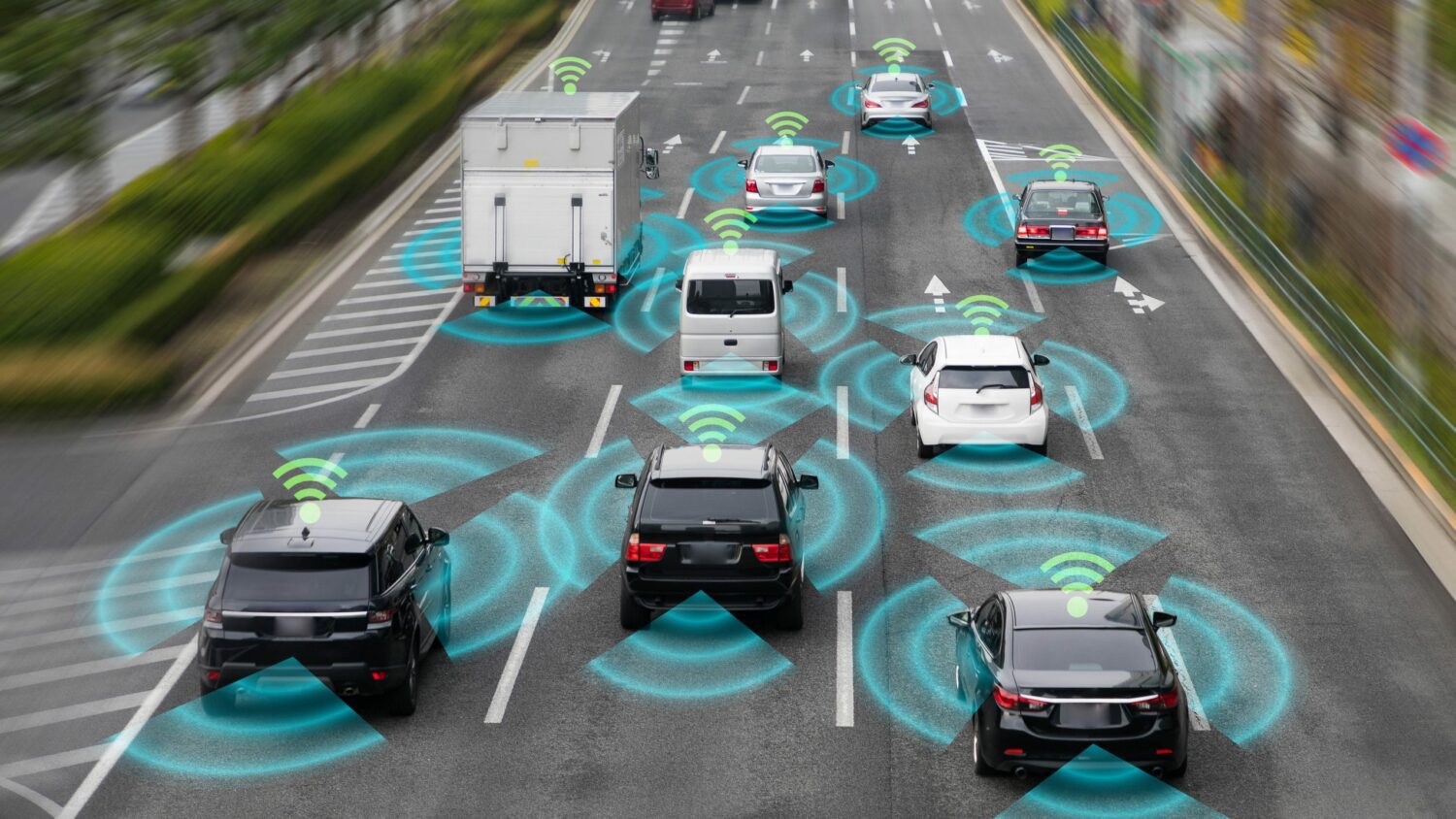

Self-driving cars were once the stuff of science fiction. Today, the idea of breaking out the laptop stand, drinking some coffee, and letting the car do the work during your morning commute is becoming reality. However, a few issues around the legality and safety of autonomous cars are still being worked out. Let’s take a look at what’s happening out there.

Liability for accidents

One goal of a self-driving car is to hand responsibility for maintaining order on the road to carefully designed software, but real-world conditions can still cause an autonomous vehicle to crash.

Policymakers see a major issue in how vehicles make safety decisions. While autonomous vehicles are expected to eventually have a low rate of accidents per mile, the numbers aren’t there yet. Multiple sources cite a crash rate of 9.1 crashes per one million miles traveled for autonomous vehicles, while the rate for human-driven vehicles is so far much lower at 4.1 per one million miles.
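To put those two figures side by side, here is a minimal Python sketch of the arithmetic. The helper name `crashes_per_million_miles` is hypothetical and only illustrates how a raw crash count would be normalized; the two rates are the ones quoted above, not new data.

```python
def crashes_per_million_miles(crashes: float, miles: float) -> float:
    """Normalize a raw crash count to crashes per one million miles."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return crashes / miles * 1_000_000


# Rates quoted in the article (crashes per one million miles traveled).
AUTONOMOUS_RATE = 9.1
HUMAN_RATE = 4.1

# How many times higher the quoted autonomous rate is than the human rate.
ratio = AUTONOMOUS_RATE / HUMAN_RATE
print(f"Autonomous vehicles crash about {ratio:.1f}x as often per mile")
```

On the quoted figures, the autonomous rate works out to a little more than double the human-driven rate.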

Since autonomous cars will still crash regardless of our wishes, you have to ask: Who is liable when a self-driving car crashes? This Wall Street Journal article says that people deciding the question, such as juries, are likely to find the vehicle at fault, and by extension the manufacturer, even if the driver, a pedestrian, or another vehicle made the mistake.

This isn’t good news for manufacturers, who would be on the hook for damages in accidents even if their vehicle’s autonomous systems were working correctly. For example, an autonomous vehicle might be forced into a situation where avoiding an accident completely isn’t physically possible, and a computer, rather than the driver, decides who or what to hit.

Unfortunately, reports so far suggest that Tesla accounts for nearly 70% of the crashes reported. This indicates both that Tesla vehicles are quite popular and that more work is needed to avoid crashes.

Regulation and autonomous driving

In 2022, US regulators removed the requirement for autonomous vehicle manufacturers to include equipment that allows a driver to take control of the vehicle, like a driver’s seat and a steering wheel.

While federal regulators have started to move, state-by-state decisions still need to be made regarding the legality of using autonomous vehicles on the road. Eighteen states currently allow the use of autonomous vehicles for non-commercial purposes, while others allow only the testing of autonomous vehicles in a commercial capacity.

You can find more NHTSA information here, including a complete list of which states allow what for autonomous driving vehicles.

Data protection and privacy

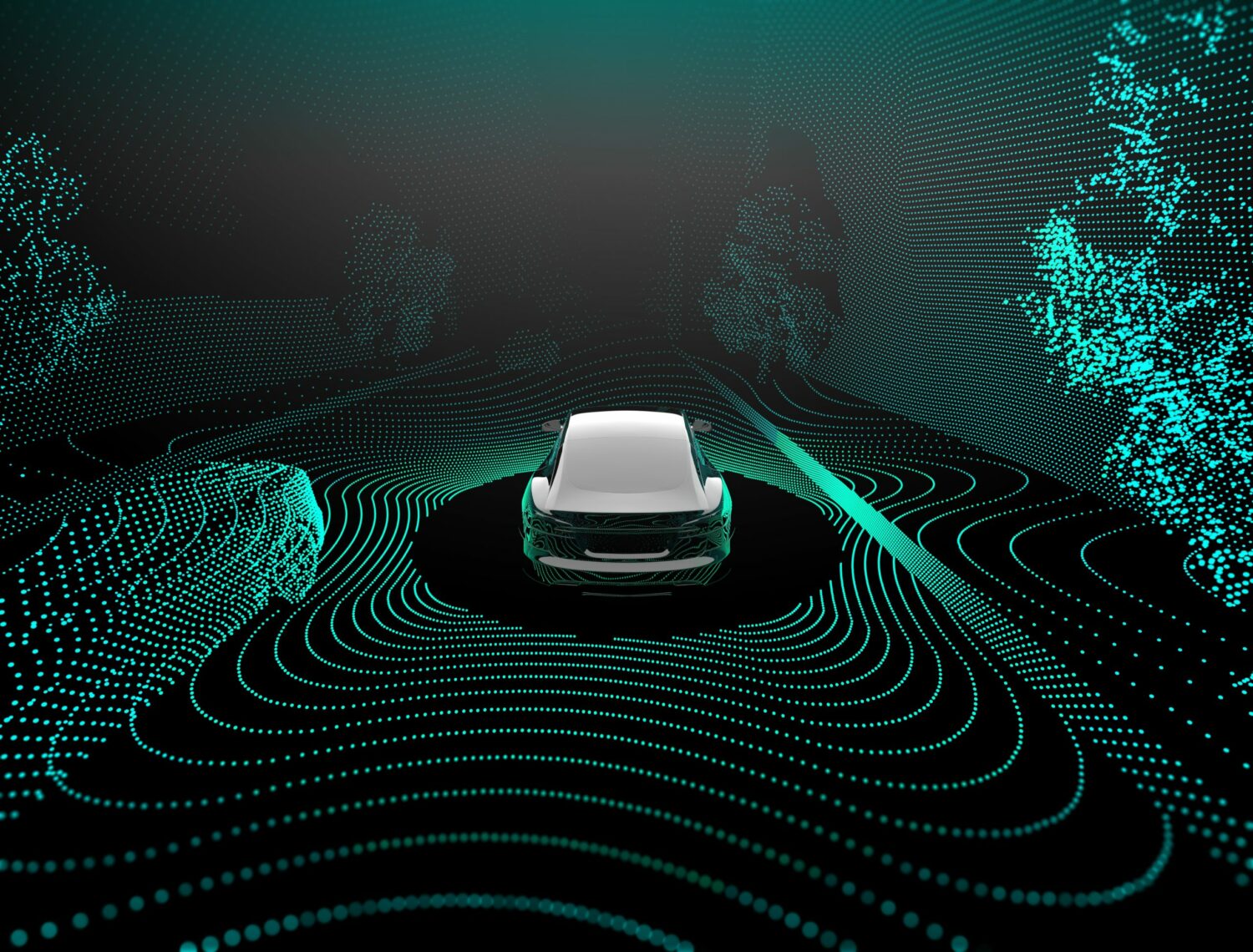

Autonomous driving involves collecting data about the driver’s location, ownership information, and even driving habits. Keeping this information secure is important, and selling it poses the risk that marketing companies could learn rather private details about your life.

Traveling in an autonomous vehicle also requires telling the vehicle where to go, whether through a smartphone, voice command, or in-car technology. Regulators would need to decide how much privacy is warranted, since the user could ask to be driven anywhere and would need to deliver that information to a privacy-enabled electronic device.

Conclusion

Autonomous vehicles are slowly becoming more mainstream. As regulators and manufacturers work out these issues, you’ll see autonomous vehicles become more widely available and, hopefully, safer than traditional human-driven vehicles.